In a 2011 article in The Modern Language Journal, Elana Shohamy, a language assessment specialist at Tel Aviv University, addresses the question of whether language tests can be multilingual. To answer it, Shohamy explains that a test must meet two criteria to be valid: first, it has to target a clearly defined construct, such as “language skills for academic studies and the workplace”; second, it has to measure that construct accurately through a well-designed test. Shohamy argues that even though test-makers have devoted great attention to test design (#2), they get the construct wrong (#1). This is because they equate “language skills for academic studies and the workplace” with monolingual performance, which is not how multilinguals function in their studies and at work… including multilinguals who are successful in these domains. She therefore proposes ways in which language tests can incorporate multilingual elements to assess the language performance of multilinguals, as well as monolinguals, more accurately, if the tests’ goal is indeed to assess academic and employment readiness rather than to create social barriers. Such reforms are not only possible but in fact essential for construct validity.

Shohamy, E. (2011). Assessing multilingual competencies: Adopting construct valid assessment policies. The Modern Language Journal, 95(3), 418-429. https://doi.org/10.1111/j.1540-4781.2011.01210.x

Shohamy begins by reflecting on a standardized testing project she was involved in (1999-2003), which sought to develop a national test in Israel to compare the academic achievement of native Hebrew speakers with that of immigrants from Ethiopia and the former USSR. The research asked “how long” it took these multilinguals to catch up to their monolingual peers in academic performance. The answer was about a decade (9-11 years) for the immigrants from the former USSR, while the Ethiopian students never closed the gap, not even in the second generation.

In this 2011 MLJ article, Shohamy reflects on both the validity and the ethics of that research. In retrospect, the test takers probably used multilingual strategies and translingual communicative practices to get by, and even succeed, at university, in trade schools, and in a wide variety of workplaces… within communities of practice that were just as likely to be multilingual as monolingual, and that contributed as much to national growth and development as monolingual ones. Practically speaking, the monolingual tests did not fully measure the multilingual test takers’ capacity to succeed at school and work. Instead, they (1) failed to capture the knowledge and literacy skills test takers had in their first languages (L1s), (2) undermined their academic confidence and self-concept, and (3) held them back from educational opportunities, or at least delayed the attainment of their educational and career goals. Reflecting on this testing situation, in which she played a key role, Shohamy writes:

> The expectation to demonstrate academic knowledge via national languages is the dominant model everywhere. The use of a dominant national language as the means of demonstrating academic achievement on tests is an example of buying into research designs that fit national language ideologies of one nation, one language, and hence mask the real trait that is the target of the measurement [i.e., academic and workplace preparedness]. (p. 419)

The issue she highlights is not a matter of liberalism or political correctness. It concerns the failure of language proficiency tests to measure what they purport to measure when they define all language use in post-secondary education and in trades and careers as monolingual and native-like. In her article, Shohamy examines the causes and consequences of this fallacy and then offers recommendations for language test developers to increase their tests’ construct validity.

Monolingual tests: Do they really measure what they are supposed to measure?

While translanguaging in classrooms has become more widely accepted over the last decade, language tests have remained monolingual because, Shohamy argues, they reflect large-scale nationalist ideologies; that is, they are “used mostly by central authorities as an ideological tool for the creation of national and collective identities” (p. 420). Citizenship tests are one example: despite (or perhaps because of?) the waves of immigration to developed countries from other parts of the world, such tests perpetuate the ideology of “one nation, one language” to maintain a certain national image.

Due to ideologies of nationalism, the children of immigrants are subject to monolingual standardized tests in K-12 education, from national tests (e.g., those mandated under No Child Left Behind in the USA) to international ones such as PISA (Programme for International Student Assessment), which compares the educational achievement of students across countries. Meanwhile, adults are subject to language proficiency frameworks like the Council of Europe’s CEFR (Common European Framework of Reference for Languages), which serve gatekeeping functions across the professions. In the USA, the ACTFL (American Council on the Teaching of Foreign Languages) language tests serve gatekeeping functions for study abroad and the foreign service, and the actual translanguaging practices of host countries can come as a shock to Americans who have trained for monolingual environments before leaving the United States; see, for example, Mori and Sanuth’s (2018) research on American learners of Yorùbá during an eight-week sojourn in Nigeria.

Now, to be valid, tests that measure academic and workplace readiness should adequately mirror “real life language activities” (Shohamy, 2009). If we add the caveat “…except those activities that are multilingual,” we lose a significant degree of validity. Ideologies of “one nation, one language” do not capture the reality of language use anywhere; here, Shohamy cites critics of monolingualism such as Creese and Blackledge (2010), García and Sylvan (2011), Li Wei and Martin (2009), Menken (2008, 2009), and Makoni and Pennycook (2006). Many of these scholars have pointed out that standardized tests at the K-12 level (e.g., those under No Child Left Behind, and PISA) are intended to measure academic ability rather than language skills specifically, yet emergent bilinguals (e.g., students who have recently moved to a new country and are just starting to develop their second language, or L2) and simultaneous bilinguals (e.g., Latinx students in New York who have grown up speaking Spanish and English together in social and later academic domains) cannot demonstrate their full academic competence on such tests. In other cases (e.g., CEFR, ACTFL), language skills are the focus of measurement, and the tests act as a proxy for university and workplace readiness, for instance how effectively people would deal with reading an academic article or communicating with co-workers. However, since the tests are monolingual, “testers do not design tests that are based on the reality of how languages are really used and learned… but rather take the side of the ideological institutions” (p. 426).

Such monolingual tests overlook strong research findings showing that immigrants continue to use their whole language repertoires in academic studies and in the workplace long after immigration (Thomas & Collier, 2002), no matter how proficient they become in the national language. Whether they are children, teenagers, or adults, multilinguals transfer knowledge and conceptual information across languages (Haim, 2010), which speeds up learning in all their languages (Cummins, 1991, 2005). Since multilinguals may never function monolingually and in a native-like way, down to their mental processing, and may never need to, asking “how long it takes them” to do so leads us to consider “whether this is even a legitimate question to ask” (Shohamy, 2011, p. 422).

Another issue of (il)legitimate differentiation arises with test items that contain idiomatic expressions or (pop) cultural references that foreigners struggle with, regardless of their proficiency in the language. Shohamy points out that Differential Item Functioning (DIF) analysis, a statistical technique that flags items on which test takers from different groups but with the same overall ability perform differently, can be used to identify questions like these (p. 424). Yet test makers may neglect to run DIF analyses to check whether such items exist in their tests or, worse, purposely include them, making such cultural knowledge a “must” on high-stakes examinations. This raises the question of whether it is really important for a test taker to know “mainstream” (pop) cultural knowledge (Duff, 2002; Norton Peirce, 1995) to succeed in their academic studies or in the workplace. This is not to say that such knowledge is not useful, but robust research suggests that “mainstream” is a myth: immigrants and sojourners can participate in a wide variety of communities, need not pick just one, and develop networks and constellations of cultural knowledge in highly individual ways (Malsbary, 2012; Surtees & Balyasnikova, 2016; Zappa-Hollman & Duff, 2015).
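To make the idea of DIF more concrete, here is a minimal Python sketch of one widely used DIF statistic, the Mantel-Haenszel common odds ratio. Shohamy does not say which DIF method test makers should use, so the choice of Mantel-Haenszel, the function names (`mantel_haenszel_dif`, `make_responses`), and the toy counts are all illustrative assumptions, not data from the article. Test takers are first matched on total score; within each score level, the odds of answering the item correctly are compared for a “reference” group (e.g., native speakers) and a “focal” group (e.g., immigrants). An odds ratio near 1 suggests the item behaves the same way for both groups; on the ETS delta scale (-2.35 times the natural log of the odds ratio), negative values suggest the item is harder for the focal group than their overall scores would predict.

```python
import math
from collections import defaultdict


def mantel_haenszel_dif(responses):
    """Mantel-Haenszel DIF statistics for a single test item (illustrative sketch).

    `responses` is an iterable of (group, total_score, correct) tuples:
    group is "reference" or "focal", total_score is the matching variable
    (e.g., total test score), and correct is 1 or 0 for this item.
    Returns (alpha_mh, mh_d_dif).
    """
    # One 2x2 table per score level:
    # [reference correct, reference wrong, focal correct, focal wrong]
    tables = defaultdict(lambda: [0, 0, 0, 0])
    for group, score, correct in responses:
        if group == "reference":
            tables[score][0 if correct else 1] += 1
        elif group == "focal":
            tables[score][2 if correct else 3] += 1
        else:
            raise ValueError(f"unknown group: {group!r}")

    numerator = 0.0    # sum over score levels of A*D/N
    denominator = 0.0  # sum over score levels of B*C/N
    for a, b, c, d in tables.values():
        n = a + b + c + d
        numerator += a * d / n
        denominator += b * c / n

    if numerator == 0 or denominator == 0:
        raise ValueError("common odds ratio is undefined for these data")

    alpha_mh = numerator / denominator
    # ETS delta scale: negative values mean the item favors the reference group
    mh_d_dif = -2.35 * math.log(alpha_mh)
    return alpha_mh, mh_d_dif


def make_responses(group, score, n_correct, n_wrong):
    """Hypothetical helper: expand counts into (group, score, correct) tuples."""
    return [(group, score, 1)] * n_correct + [(group, score, 0)] * n_wrong


# Made-up toy data: two score levels, 10 test takers per group at each level.
data = (
    make_responses("reference", 10, 8, 2) + make_responses("focal", 10, 4, 6)
    + make_responses("reference", 20, 9, 1) + make_responses("focal", 20, 6, 4)
)
alpha, delta = mantel_haenszel_dif(data)
print(f"alpha_MH = {alpha:.2f}, MH D-DIF = {delta:.2f}")
# prints: alpha_MH = 6.00, MH D-DIF = -4.21
```

With these made-up counts the odds ratio is 6, giving a delta value of about -4.2, so an item like this (say, one hinging on an idiom) would be flagged for content review. A flag is a prompt to examine the item, not proof that it is unfair.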

In addition, if tests truly aim to measure constructs such as academic and workplace readiness, they have to consider what multilinguals actually do when faced with academic and professional tasks. For example, when multilinguals process an academic text in one of their languages, they often use electronic dictionaries and write bi/multilingual notes on the text. This is not a temporary coping mechanism, used only until they reach adequate proficiency in their second language (L2); people who are academically fluent in their L2 continue to do this throughout their professional careers to manage their private thinking. It is therefore necessary to see students’ bi/multilingual processing of test items “as a more valid construct and not as the ‘route to monolingualism’” (Shohamy, 2011, p. 425). This is just one example Shohamy gives of what multilinguals do differently from monolinguals, and of what tests should allow if they genuinely aim to measure everyone’s academic and workplace readiness. Measuring multilinguals’ performance when they are forced to function monolingually does not capture how they actually behave beyond the test. Instead, there are ways to build multilingual competencies into the design of language and literacy tests and so achieve greater construct validity.

How translanguaging can be incorporated into (standardized) tests to improve construct validity

When it comes to proposals for multilingual assessment, Shohamy draws on two types of multilingual pedagogy that mark the two ends of a continuum: (1) different languages for different purposes (e.g., read an editorial in Welsh, discuss meaning and opinions in English, and plan in English to write a response in Welsh), and (2) dynamic translanguaging (e.g., discuss opinions on an issue in a mix of Spanish and English, “without regard for watchful adherence to the socially and politically defined boundaries of named (and usually national and state) languages”; Otheguy, García, & Reid, 2015, p. 283, italics in original). The language choices built into task design should reflect what people do with that task in real life:

> On one side of the continuum, language x is used for certain purposes, such as reading, whereas language y is used for writing, and language z is used for discussions. In many classes in Arab schools in Israel, a text in history is read in English, students are asked to summarize the article in Hebrew [the language in which they have received schooling and are likely most literate in], and the oral discussion takes places in Arabic, either Modern Standard or a spoken dialect [which they speak at home]. The extent to which these three (or four, including the Arabic varieties) languages, used in the same space, are actually kept homogeneous and separate is probably too idealistic and unreal. On the other extreme side of the continuum, tests are based on the approach in which a mixture of languages and open borders among them is a recognized, accepted, and encouraged variety. (p. 427)

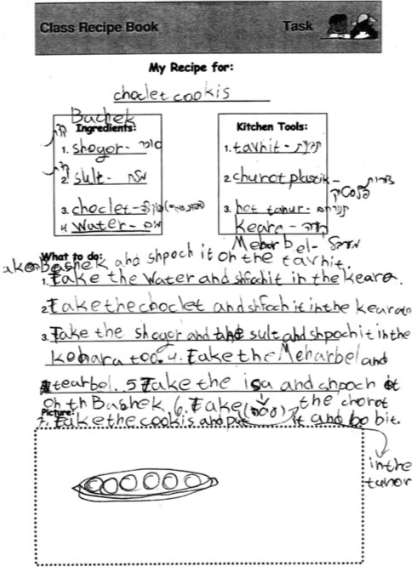

In many contexts—and this is something Shohamy doesn’t discuss—the formal classroom assessments cannot be multilingual because the teachers are not multilingual. This is the case in many English-dominant countries. However, in other contexts, the teachers are in fact bi/multilingual in the same language(s) as the students, and in such cases, teacher-made multilingual assessments to measure learning of a subject can feasibly be used, at either end of the continuum, and everywhere in between, to capture how students would actually use their languages in real life. [In fact, such assessments are probably used more often than is reported in the research literature, perhaps more so at the elementary level, even though bi/multilingual annotations may appear in handouts and activities until the secondary level in many classrooms around the world.] Here, for example, is an authentic demonstration of dynamic translanguaging in a process text:

In the above image from the article, an elementary student’s bi/multilingual recipe, note that many lexical items for kitchen implements and cookie ingredients are in Hebrew (the student’s dominant L1), whereas common procedural terms like “take the,” conjunctions like “and,” and prepositional phrases like “in the” are in English. This suggests that the learner is drawing on what they already know, without an electronic dictionary (their English is limited to very common phrases); allowing such a dictionary could help the student expand their repertoire and give them more choice of expression. For example, teachers might begin with a recipe-writing activity in which students translanguage freely but without a dictionary (diagnostic assessment), follow it with one in which students collaboratively write recipes using an electronic dictionary (learning task), and finish with another in which they individually write recipes with or without an electronic dictionary (summative assessment), depending on the learning outcomes to be assessed.

When it comes to standardized tests at the national level, there are also multilingual possibilities. For example, in the middle of the continuum, Shohamy observes that math tests can present questions bilingually (in her example, in Hebrew and Russian): “Although on the surface, this version of a test seems to follow the approach of multilingual tests with two homogeneous languages, when students were asked about the process they followed in responding to the text, they admitted to using a bilingual pattern of thinking while responding. Specifically, they claimed that they took some words from the Russian version, understood the syntax from the Hebrew version, and combined both in the process of meaning-making” (p. 427). Students can also be provided with bi/multilingual translations of key words and glossaries in the margins of the text, and/or be allowed to use electronic dictionaries during the examination. Even if students’ written or oral output needs to be monolingual for the markers or judges, the in-class preparation for that output does not need to be monolingual, and such preparation is often more effective if bi/multilingual strategies are used. The goal is to keep in mind what will actually happen in the real-life situations the tests supposedly reflect.

To conclude, Shohamy writes that “there is a need to carry out research that looks deeper into meaning construction of bilingual/multilingual test-takers in different settings” (p. 428). Such meaning construction draws on the entire language repertoire, as well as on various multimodal resources, which could also be included or permitted on tests to provide contextual cues or serve as additional resources, as they might in real-life situations. In Shohamy’s view, such adaptations would provide a fuller simulation of authentic tasks and, in turn, more valid test scores.

References

Creese, A., & Blackledge, A. (2010). Translanguaging in the bilingual classroom: A pedagogy for learning and teaching? The Modern Language Journal, 94, 103-115.

Cummins, J. (1991). Interdependence of first- and second-language proficiency in bilingual children. In E. Bialystok (Ed.), Language processing in bilingual children (pp. 70-89). Cambridge University Press.

Cummins, J. (2005). A proposal for action: Strategies for recognizing heritage language competence as a learning resource within the mainstream classroom. The Modern Language Journal, 89(4), 585-592. https://www.jstor.org/stable/3588628

Duff, P. (2002). Pop culture and ESL students: Intertextuality, identity, and participation in classroom discussions. Journal of Adolescent & Adult Literacy, 45(6), 482-487. https://www.jstor.org/stable/40014736

García, O., & Sylvan, C. E. (2011). Pedagogies and practices in multilingual classrooms: Singularities in pluralities. The Modern Language Journal, 95(3), 385-400. https://doi.org/10.1111/j.1540-4781.2011.01208.x

Haim, O. (2010). The relationship between academic proficiency (AP) in first language and AP in second and third languages [Doctoral dissertation, Tel Aviv University].

Li Wei, & Martin, P. (2009). Conflicts and tensions in classroom codeswitching: An introduction. International Journal of Bilingual Education and Bilingualism, 12(2), 117-122. https://doi.org/10.1080/13670050802153111

Makoni, S., & Pennycook, A. (Eds.). (2006). Disinventing and reconstituting languages. Clevedon, UK: Multilingual Matters.

Malsbary, C. B. (2012). “Assimilation, but to what mainstream?”: Immigrant youth in a super-diverse high school. Encyclopaideia, 16(33), 89-112.

Menken, K. (2008). English learners left behind: Standardized testing as language policy. Clevedon, UK: Multilingual Matters.

Menken, K. (2009). High-stakes tests as de facto language education policies. In E. Shohamy & N. H. Hornberger (Eds.), Encyclopedia of language and education: Vol. 7. Language testing and assessment (2nd ed., pp. 401–414). Berlin, Germany: Springer.

Mori, J., & Sanuth, K. K. (2018). Navigating between a monolingual utopia and translingual realities: Experiences of American learners of Yorùbá as an additional language. Applied Linguistics, 39(1), 78-98. https://doi.org/10.1093/applin/amx042

Norton Peirce, B. (1995). Social identity, investment, and language learning. TESOL Quarterly, 29(1), 9-31. https://doi.org/10.2307/3587803

Otheguy, R., García, O., & Reid, W. (2015). Clarifying translanguaging and deconstructing named languages: A perspective from linguistics. Applied Linguistics Review, 6(3), 281-307. https://doi.org/10.1515/applirev-2015-0014

Shohamy, E. (2009). Introduction to Volume 7: Language testing and assessment. In E. Shohamy & N. H. Hornberger (Eds.), Encyclopedia of language and education: Vol. 7. Language testing and assessment (2nd ed., pp. xiii–xxii). Berlin, Germany: Springer.

Surtees, V., & Balyasnikova, N. (2016). Culture clubs in Canadian higher education: Examining membership diversity. Canadian Journal for New Scholars in Education/Revue canadienne des jeunes chercheures et chercheurs en éducation, 7(1). https://journalhosting.ucalgary.ca/index.php/cjnse/article/view/30680

Thomas, W., & Collier, V. (2002). A national study of school effectiveness for language minority students’ long-term academic achievement: Final report. Project 1.1. Santa Cruz, CA: Center for Research on Education, Diversity and Excellence (CREDE).

Zappa‐Hollman, S., & Duff, P. A. (2015). Academic English socialization through individual networks of practice. TESOL Quarterly, 49(2), 333-368. https://doi.org/10.1002/tesq.188

